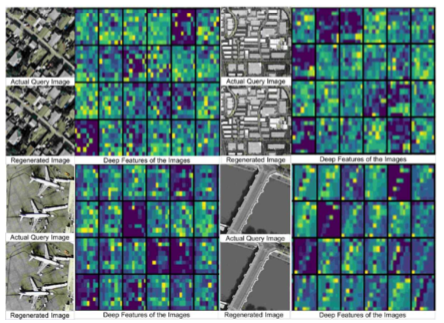

This project addresses matching-based image retrieval and localization. The query and target images can come from the same view (both ground-level images or both satellite images) or from different views (cross-view), where the query is a ground image and the target is a satellite image, or vice versa.

I started this work during my MS thesis, where I developed an unsupervised, discriminative, autoencoder-based modular framework for image retrieval that outperformed the methods it was compared against. The proposed approach addresses diminishing feature reuse, one of the inherent flaws of deep ResNets.

Later, we collected our own cross-view dataset containing 43 classes. To map satellite and ground images into a common space, we explored cross-view generation techniques such as conditional GANs, including pix2pix. However, because both views exhibit an unbounded range of variation, the generated images are largely arbitrary, which ultimately impairs retrieval. As a baseline study, we compared supervised deep features for retrieval using different similarity measures and discriminator models. Visualizing these features led us to define meta-classes for learning super-clusters, which should further improve the separation between similar scene categories.
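The baseline comparison of similarity measures can be illustrated with a minimal sketch. It assumes that feature vectors have already been extracted by some network (the feature extractor is not shown). The function names, toy vectors, and scene labels below are hypothetical and are not the project's actual code or data. Each gallery image is ranked against a query under two measures, and precision@k counts how many of the top-k results share the query's class.

```python
import math
from typing import Callable, List, Sequence

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine similarity: depends only on direction; higher means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat zero vectors as unrelated
    return dot / (norm_a * norm_b)


def negative_euclidean(a: Vector, b: Vector) -> float:
    """Negated Euclidean distance, so that higher still means more similar."""
    return -math.dist(a, b)


def rank_gallery(query: Vector, gallery: List[Vector],
                 similarity: Callable[[Vector, Vector], float]) -> List[int]:
    """Return gallery indices ordered from most to least similar to the query."""
    scores = [similarity(query, g) for g in gallery]
    return sorted(range(len(gallery)), key=lambda i: scores[i], reverse=True)


def precision_at_k(ranking: List[int], gallery_labels: List[str],
                   query_label: str, k: int) -> float:
    """Fraction of the top-k retrieved items whose label matches the query's."""
    hits = sum(1 for i in ranking[:k] if gallery_labels[i] == query_label)
    return hits / k


# Toy example with hypothetical 2-D features and scene labels.
query = [1.0, 0.0]
query_label = "park"
gallery = [[0.9, 0.1], [0.0, 1.0], [10.0, 0.5], [-1.0, 0.0]]
gallery_labels = ["park", "beach", "park", "road"]

cos_rank = rank_gallery(query, gallery, cosine_similarity)
euc_rank = rank_gallery(query, gallery, negative_euclidean)
print(cos_rank, precision_at_k(cos_rank, gallery_labels, query_label, 2))  # [2, 0, 1, 3] 1.0
print(euc_rank, precision_at_k(euc_rank, gallery_labels, query_label, 2))  # [0, 1, 3, 2] 0.5
```

The same features produce different rankings depending on the measure. Cosine similarity ignores vector magnitude, so the large-norm "park" vector ranks first. Euclidean distance penalizes that vector for its magnitude and pushes it to last place.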


Archives

August 2019


Mohbat Tharani
